Executive Summary of my Question

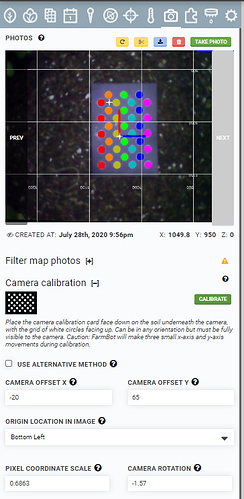

Updating my question. After further experimentation, if I use a default FarmBot camera calibration (i.e. using the calibration card and specifying an object distance of 100 mm), I get a pixel coordinate scale of 0.6154 and the pictures along the X axis align well – but not along the Y axis. If I fake out the calibration and tell the calibration routine the red dots are only 66.25 mm apart, I get a pixel coordinate scale of 0.4062. In that case, the alignment along the Y axis is good but not along the X axis.

What strikes me is that the camera resolution is 0.3 MP which is 640 x 480. The calibration routine doesn’t try to figure out the camera orientation with respect to which is 640 and which is 480. Is that the root issue here?
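One way to sanity-check the two scales is to work out how much of the bed a single 640 x 480 frame would cover under each one. The sketch below assumes the pixel coordinate scale is in mm per pixel (both calibrations imply roughly the same dot separation, 100 / 0.6154 ≈ 66.25 / 0.4062 ≈ 163 px, which fits that reading) and that one scale applies to both image axes. `footprint_mm` is a hypothetical helper for illustration, not part of FarmBot.

```python
# Assumption: scale is mm per pixel and is the same for both image axes.
SENSOR_PX = (640, 480)  # (width, height) of the 0.3 MP camera image


def footprint_mm(scale_mm_per_px, size_px=SENSOR_PX):
    """Return the (width, height) in mm that one image covers on the bed."""
    width_px, height_px = size_px
    return (width_px * scale_mm_per_px, height_px * scale_mm_per_px)


if __name__ == "__main__":
    # The two scales reported above: truthful 100 mm and faked 66.25 mm.
    for scale in (0.6154, 0.4062):
        width_mm, height_mm = footprint_mm(scale)
        print(f"scale {scale}: {width_mm:.1f} mm x {height_mm:.1f} mm")
```

That works out to roughly 394 x 295 mm for the 0.6154 scale and 260 x 195 mm for the 0.4062 scale. Comparing those footprints with the X and Y step between photo positions may show which axis each scale happens to fit.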

I’m somewhat stumped. I feel like I have a good process in terms of a golden image with tight detection. But I don’t see how to get my pictures overlapped correctly in both dimensions.

Details for the Curious Including Some Tips (ignore this if you are not curious)

I have a Genesis 1.5. If you are just getting started with camera calibration, it has two goals:

- Determine the orientation in which the camera is hanging. Think of this as an angle from 0 to 360 degrees relative to the origin of your FarmBot.

- Determine how the camera image size relates to the physical world – how many pixels do you have per mm of physical space at a given height.

To do this, they give you a nifty card with two red circles that are exactly 100 mm apart. The card has 4 quadrants, and one of them has a home icon. If you place the card so that the home icon points toward your origin and take a picture, you can a) tell the orientation and b) work out how many pixels correspond to one mm, since the dots are known to be 100 mm apart.
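The idea behind the two goals can be sketched in a few lines. This is only an illustration of the geometry, not FarmBot's actual calibration code: `calibrate` is a hypothetical function, the dot centres are made-up pixel coordinates, and the angle convention (measured from the image X axis, with image Y pointing down) is an assumption.

```python
import math


def calibrate(dot_a, dot_b, separation_mm=100.0):
    """Estimate scale (mm per pixel) and rotation (degrees) from two dot centres.

    dot_a, dot_b: (x, y) pixel coordinates of the two red dots (hypothetical).
    separation_mm: the real-world distance you tell the routine the dots are apart.
    """
    dx = dot_b[0] - dot_a[0]
    dy = dot_b[1] - dot_a[1]
    pixel_distance = math.hypot(dx, dy)
    scale_mm_per_px = separation_mm / pixel_distance
    # Angle of the line joining the dots, relative to the image X axis.
    rotation_deg = math.degrees(math.atan2(dy, dx))
    return scale_mm_per_px, rotation_deg


if __name__ == "__main__":
    # Made-up dot centres 162.5 px apart along the image X axis.
    scale, rotation = calibrate((100.0, 200.0), (262.5, 200.0))
    print(f"scale = {scale:.4f} mm/px, rotation = {rotation:.1f} deg")
```

Note that the scale depends only on the stated separation and the pixel distance between the dots, so a single number comes out regardless of whether the dots lie along the 640 or the 480 direction of the image.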

I have some tips I’d like to share that I used.

- Before doing anything with the calibration settings (i.e. with all calibration data cleared), go out and put your FarmBot near a corner edge of your bed.

- Take a picture and examine the orientation of the camera to try to get the camera aligned with your bed – in other words, with no calibration settings applied, you want the camera to look down squarely on the bed. Doing this will help eliminate problems with images that need to be heavily cropped after rotation.

- Adjust the camera and then take another picture. Repeat until you have good alignment.

Now we are ready to start with the software side. For me, during the day my calibration card (as well as my 3D-printed red golf tees) was washed out by the sun. I got my best results closer to dusk.

Get a Golden Image

This part is critical. You don’t want to use the calibration function over and over because it takes a new picture each time. With each new picture, you get new variations (especially of light). What you want to do is to end up with a picture that you can scan multiple times and get the same calibration result each time. The importance of this will become clear.

Take a picture and then select “Scan Current Image”. You’ll have to adjust hue, saturation, etc. until you get a good calibration image. You want something like this:

In this image, you can see that the calibration dots were cleanly selected and hence I have high confidence in the final result. If you didn’t get this, then you should adjust your settings (Hue, Value, Saturation) until you can get something like this. It won’t do you any good to go forward until you have a golden image. In my case, the golden image is this:

In other words, it is the raw image without calibration marks.

Fine Tune Your Calibration

I got a great golden image and a clean calibration. But, when I took photos of my entire bed, it was a mess. Images were much larger than they should have been and the FarmBot overlay of tools and seed trays didn’t match the image.

Remember, the calibration is only figuring out 2 things – the rotation and the pixel to real world ratio for a given Z height. Since my images were too big, I determined that I needed to reduce “Pixel Coordinate Scale”. The pixel coordinate scale is an input box, so you could edit the value directly, but don’t: if you do, you can’t later do Weed calibration, because the scale has to be close to what was determined by the calibration routine.

Instead, lie to the calibration routine about the distance between the calibration object points. In my case, if I tell it the objects (the two red dots on the calibration card) are 66.25 mm apart and then scan my golden image, I get a lower pixel coordinate scale.
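If you want a particular pixel coordinate scale, you can estimate what distance to enter rather than guessing. This is a rough sketch that assumes the resulting scale is proportional to the object distance you enter when scanning the same golden image (my numbers suggest it is, but that is an inference, not something taken from the FarmBot code). `separation_for_target_scale` is a hypothetical helper.

```python
def separation_for_target_scale(true_separation_mm, measured_scale, target_scale):
    """Return the object distance (mm) to enter to get target_scale.

    Assumes scale is proportional to the entered distance for a fixed image.
    measured_scale: scale obtained when entering true_separation_mm.
    """
    return true_separation_mm * target_scale / measured_scale


if __name__ == "__main__":
    # Scales from my calibrations: 0.6154 with the truthful 100 mm.
    fake_mm = separation_for_target_scale(100.0, 0.6154, 0.4062)
    print(f"enter about {fake_mm:.1f} mm")
```

With my numbers this comes out at about 66 mm, close to the 66.25 mm I ended up entering; the small difference is within what rounding of the reported scales would explain.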

When I take a photo of a row on my FarmBot, it looks like this:

You can see there is still some room to tweak the angle but things line up pretty well. The question I have is why, when I specified a 100 mm object separation distance, did I get such a bad result.

After further experimentation, when I take this approach, the single row as shown above looks good. However, for an entire bed, things are not aligned. One plant will show up as two plants because the pixel scale is off. I re-ran calibration using the accurate object distance of 100 mm.

After calibrating with the truthful object distance, the rows above are not aligned, but the columns are, as you can see below.

I’m not sure this info is going to help at all but I’m getting a bit desperate in trying to troubleshoot this haha. Can you provide:
